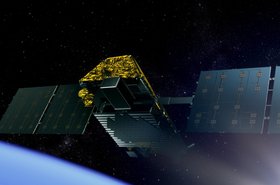

Last year, HPE and NASA teamed up to send a small supercomputer to the International Space Station.

The project's goal was simple - to see if the system could survive a year, roughly the time it would take to fly to Mars. The project has proved an unmitigated success, HPE's Dr Mark Fernandez told DCD, in an interview discussing the space system's future.

Above the cloud

"We can't believe it," Fernandez, HPE's Americas HPC technology officer and Spaceborne Computer payload developer, said. "Our experiment was set to last a year, where we ran stress-inducing benchmarks of various types to prove that the system can function in the harsh conditions on the ISS. It's been a 100 percent success."

With risk factors including radiation, solar flares, subatomic particles, micrometeoroids, unstable electrical power and irregular cooling, most hardware on the International Space Station is hardened, which increases costs and weight. The Spaceborne Computer, on the other hand, tested out the concept of software hardening "because software doesn't weigh anything," Fernandez said.

"The hardware is straight off the factory floor," and is simply a two server HPE Apollo 40 system, only slightly changed to fit in a NASA locker, rather than a 19" rack. "We've got 32 cores and a whole bunch of solid state disks, a whole bunch of memory and depending on who we ask we're 30 times to a 100 times faster than anything else on board the ISS. And we've lasted longer than any IT on board that is not hardened for that mission."

It uses a rear door water cooling system to keep the supercomputer chilled, and relies on power from the ISS' solar panels. "We are the most energy efficient computer anywhere in existence on Earth - or elsewhere - because we get free electricity from the solar cells of the ISS and we get free cooling from the coldness of space. So it costs nothing to operate," Fernandez said.

"I jokingly say that as soon as Jeff Bezos can figure out how to get his data center in space, it will be there because OpEx often exceeds CapEx and OpEx is pretty low in terms of power and cooling up there: it's zero."

The year did not pass without trouble, however. "I have had a full range of interrupts," Fernandez said excitedly. "I have had an unscheduled interrupt due to a hardware failure - not mine, a NASA hardware failure - I have had an unscheduled interrupt due to human error. I have had an unscheduled network interrupt due to a hardware failure and I have had a scheduled interrupt due to a maintenance event. So I've got the full gamut of events that we would experience on an earthbound data center and we've successfully navigated them - it's pretty exciting."

With the system far from any trained data center engineers, the company also had to change how it approaches maintenance. "We had a power supply failure but we have redundant power supplies, which are called a customer replaceable unit (CRU). But our procedure for replacing a power supply, a CRU procedure, was totally inappropriate for use in space. We had to rewrite it and it's gone through NASA and met their approvals etc. So we have an astronaut replaceable unit (ARU) procedure documented and ready to go."

While the main purpose of the mission was to test the feasibility of similar systems on a long Mars voyage, where travelers cannot turn around if hardware fails, the ISS itself has some need for onboard computing. "We think that the ISS is not that far away, that because it's going around the Earth we're in constant communication with it," Fernandez said. "In fact when it's near the northern and southern poles it cannot reach the satellites for communication and we have what they call an LOS, a loss of signal. This occurs many times per day and it can last from two to three seconds up to 20 to 30 minutes."

This meant HPE had to design the software to make the system as self-sufficient as possible, since it cannot rely on the luxury of constant connection that terrestrial data center operators have gotten used to.

"Over the past few years and decades we've dumbed down the computing nodes and put all that intelligence into the data center environmental systems," Fernandez said. "We had to turn that model upside down and put some of that stuff back in to the nodes because we're out of communication often. It has on board self-care, fault management, and if it can't phone home, it says 'okay I'm going to follow my procedures and save myself.'"

Developing this process led to innovations that could prove useful back on terra firma, HPE believes. "We have seven invention disclosures that are going through the internal HPE process of seeing if they are worthy of actual patents," Fernandez said. "They are also going through engineering review."

The original experiment may have come to an end, but HPE now finds itself with a few extra months to test the system. "There's only two ways to leave the International Space Station: One is you get in the Russian trash can and they launch it and it burns up in the atmosphere - the vast majority of folks thought that this would never ever work, and we were mentally prepared to have our baby be put in the trash can and have it burn up on re-entry and it be done," Fernandez said.

"But NASA and HPE can clearly articulate the value so we're going to come back the second way. That's what's called 'down mass,' NASA made the investment in 'up mass' to get us there, and now they will invest in 'down mass' to get us back because it's a value for us to do our product failure analysis on all the components and judge whether they look a year old or 10 years old."

With a Russian Soyuz rocket carrying astronauts having failed mid-flight last month (the astronauts escaped alive), ISS flights have been delayed. The return flight for HPE's supercomputer has now been set as February/March 2019, giving the company a few extra months to use it in space. "So we're opening up these compute capabilities to researchers, explorers etc, on Earth and on board that need to test some of their software in space," Fernandez said.

"We also think that it's going to open up some other opportunities if we can free up some downlink bandwidth by doing the processing of the massive amounts of data that are collected on ISS on board at the Edge. That should allow more experiments to be operating on the ISS because we've freed up that bandwidth.

"And that's possibly opening up some new frontiers in space."