Nvidia has unveiled the H200, its latest high-end GPU.

The H200 features 141GB of HBM3e memory and 4.8TBps of memory bandwidth, up from the H100's 80GB of HBM3 and 3.35TBps. That works out to roughly 76 percent more capacity and 43 percent more bandwidth. The GPUs are otherwise identical.

“To create intelligence with generative AI and HPC applications, vast amounts of data must be efficiently processed at high speed using large, fast GPU memory,” Ian Buck, vice president of hyperscale and HPC at Nvidia, said.

“With Nvidia H200, the industry’s leading end-to-end AI supercomputing platform just got faster to solve some of the world’s most important challenges.”

H200-powered systems are expected to begin shipping in the second quarter of 2024. Cloud providers Amazon Web Services (AWS), Google Cloud, Microsoft Azure, Oracle Cloud Infrastructure, CoreWeave, Lambda, and Vultr will deploy H200-based instances starting in 2024.

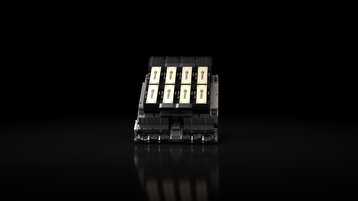

The GPU will be available in HGX H200 server boards with four- and eight-way configurations, which are compatible with both the hardware and software of HGX H100 systems.

Server makers including ASRock Rack, ASUS, Dell Technologies, Eviden, Gigabyte, Hewlett Packard Enterprise, Ingrasys, Lenovo, QCT, Supermicro, Wistron, and Wiwynn will be able to update their existing systems with the H200.

The H200 is also part of the previously announced second-generation Grace Hopper Superchip, the GH200, which combines the GPU with Nvidia's 72-core, Arm-based Grace CPU and 480GB of LPDDR5X memory on an integrated module.