In the recently published 2019 Uptime Institute supplier survey, participants told us they are seeing higher-than-normal data center spending patterns. This is in line with general market trends, driven by the demand for data and digital services. It is also a welcome sign for suppliers who experienced a downturn two to three years ago, as public cloud began to bite.

The increase in spending is not coming only from hyperscalers, which are known to design for 100x scalability and build for 10x growth. Smaller facilities (under 20MW) are also seeing continued investment, including in higher levels of redundancy at primary sites (a trend that may have surprised some).

Forecasting concerns

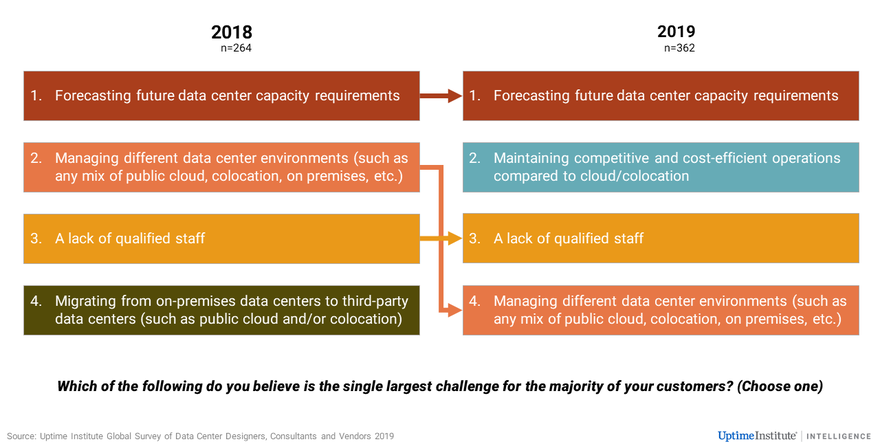

However, this growth continues to raise concerns. In this year’s survey, the top challenge operators face, as identified by suppliers, is forecasting future data center capacity requirements. This is followed by the need to maintain competitive and cost-efficient operations compared with cloud/colocation. Managing different data center environments dropped to fourth place, after coming second in last year’s supplier survey. This finding agrees with the results of our 2019 operator survey (of around 1,000 data centers operators around the world). In that survey, our analysis attributed the change to the advancement in tools and market maturity.

The figure below shows the top challenges operators faced in 2018 and 2019, as reported by their suppliers.

Forecasting data center capacity is a long-standing issue. Rapid changes in technology, and the difficulty of anticipating future workload growth when there are so many options, complicate matters. Overprovisioning capacity, the most commonly adopted strategy, leads to operational inefficiencies (and unnecessary upfront investment). Underprovisioning, on the other hand, is an operational risk and could mean facilities reach their capacity limit before the end of their planned investment lifecycle.

Depending on the sector and type of workload, many organizations have adopted modular data center designs, which can be an effective way to reduce the expense of overprovisioning. Where appropriate, some operators also move highly unpredictable workloads, or those that are easiest or most economical to transfer, to public cloud environments. These strategies, along with other factors driving the uptake of mixed IT infrastructures, mean more organizations are building expertise in managing hybrid environments. This may explain why the challenge of managing different data center environments dropped to fourth place in our survey this year. Additionally, cloud computing suppliers now offer more effective tools to help customers manage the costs of running cloud services.

Because many operators have adopted cloud-first policies, managers must demonstrate cost-effectiveness more than ever. As a result, understanding the true cost of maintaining in-house facilities versus using cloud/colocation venues is becoming more important, as the survey results above show.

The 2019 Uptime Institute operator survey also reflects this. Forty percent of participants said they are not confident in their organization's ability to compare the costs of in-house and cloud/colocation environments. Indeed, this is not a straightforward exercise. On the one hand, the structure of some enterprises (e.g., how budgets are split) makes it tricky to calculate the cost of running owned sites. On the other hand, calculating the true cost of moving to the cloud is also difficult. The transition may carry costs related to, for example, application re-engineering, network upgrades or potential repatriation (and the vast range of cloud offerings now available requires careful costing and management). This complexity, along with other issues such as vendor lock-in, is now driving many organizations to shift their policies from cloud-first toward cloud appropriateness.

The full report, Uptime Institute data center supply-side survey 2019, is available to members of the Uptime Institute Network.