With the exponential growth of data usage in recent years, data centers are becoming more and more critical to everyday life. Whether it's online gaming, remote schooling, virtual conferences, or basic shopping, billions of people access the internet every day. In 2020, Domo estimated that the internet reached 59 percent of the world's population, or a whopping 4.57 billion people!

All of this internet usage requires significant data center capacity, where servers work 24x7 to route internet traffic and process transactions. Advancements in data center design, server technology, and software development have all contributed to more energy-efficient data centers in recent years, leading some to suggest that global energy usage is growing at a much slower rate than anticipated just three to four years ago. However, data centers still consume a significant amount of the world's energy, so the need remains to keep pursuing more energy-efficient methods for operating the data centers of the future.

Fact: Servers generate heat, and this heat must be removed in order to maintain operation.

Looking for new ways to cool

Over the years, we've seen many methods for rejecting data center heat. As server technology evolved, traditional computer room air conditioning (CRAC) using direct expansion refrigeration gave way to more energy-efficient cooling methods, including economizers, which use outdoor air, either directly or indirectly, to cool data halls.

Many of these economizer solutions use water through evaporative cooling. While evaporative cooling can significantly enhance the efficiency of economizing options, water usage has become a major concern in the industry due to a growing shortage of water in many regions of the world. Many companies, such as Microsoft, have begun broad initiatives to become “water neutral” in an effort to drive down overall water usage in all of their facilities, including data centers.

While using natural resources such as outdoor air and water provides high energy efficiency, these resources also present challenges for companies operating data centers. As a result, data center designers have been rethinking their cooling strategies, often defaulting back to more traditional methods such as air-cooled chillers. Recent advancements in chiller technology offer energy savings, but is that enough, and do the savings justify the higher cost of installing chiller plants? Perhaps we can use the principles of physics to find even more efficient methods for rejecting data center heat naturally, without the use of water or the need to introduce outdoor air into the data center.

Cooling a data center is all about heat transfer. Electronics (servers) generate heat. This heat must be carried away from the servers by a fluid (air or liquid) in order for the servers to operate. This can be accomplished through several methods, but could it be done naturally by simply using the forces of nature? For example, could the heat transport medium flow to externally mounted heat rejection devices without the use of power-consuming mechanical devices?
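To put a rough number on how much fluid it takes to carry server heat away, the sketch below applies the standard sensible-heat balance Q = m × cp × ΔT to air. It is an illustration only: the 10 kW load, the 12 K air temperature rise, and the air properties are assumed round numbers, not figures from any particular data center.

```python
# Sensible-heat balance for air cooling: Q = m_dot * cp * delta_T.
# All numeric inputs below are illustrative assumptions.

AIR_CP_J_PER_KG_K = 1005.0   # specific heat of air near room temperature (approximate)
AIR_DENSITY_KG_M3 = 1.2      # density of air near sea level and 20 C (approximate)


def required_air_mass_flow(heat_load_w: float, delta_t_k: float) -> float:
    """Mass flow of air (kg/s) needed to absorb heat_load_w with a temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (AIR_CP_J_PER_KG_K * delta_t_k)


def required_air_volume_flow(heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric air flow (m^3/s) for the same heat load and temperature rise."""
    return required_air_mass_flow(heat_load_w, delta_t_k) / AIR_DENSITY_KG_M3


if __name__ == "__main__":
    load_w = 10_000.0   # hypothetical 10 kW rack
    rise_k = 12.0       # hypothetical air temperature rise across the servers
    print(f"mass flow:   {required_air_mass_flow(load_w, rise_k):.3f} kg/s")
    print(f"volume flow: {required_air_volume_flow(load_w, rise_k):.3f} m^3/s")
```

Halving the allowed temperature rise doubles the required airflow, which is one reason fan energy is a significant part of a conventional cooling system's power draw.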

Enter the thermosyphon

The answer is yes, through the driving forces of phase change and gravity, in a device known as a thermosyphon (or thermosiphon). A thermosyphon relies on natural convection, a type of heat transfer in which fluid motion is generated not by an external source but by density differences, with some parts of the fluid being heavier than others. In most cases this leads to natural circulation, the ability of a fluid in a system to circulate continuously, propelled by the fluid changing phase between liquid and vapor and by gravity.
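As a back-of-the-envelope illustration of how gravity and phase change can move the fluid, the sketch below uses a common simplification for a loop thermosyphon: the driving pressure is roughly the density difference between the liquid return column and the vapor riser, times g, times the height between evaporator and condenser. The refrigerant densities, the latent heat, and the 2 m height are hypothetical values chosen for the example, and friction losses are ignored.

```python
# Simplified loop-thermosyphon estimate (illustrative assumptions throughout):
#   driving pressure  dp    = (rho_liquid - rho_vapor) * g * height
#   refrigerant flow  m_dot = Q / h_fg   (latent heat carries the load)

G_M_PER_S2 = 9.81  # standard gravitational acceleration


def driving_pressure_pa(rho_liquid: float, rho_vapor: float, height_m: float) -> float:
    """Gravity head (Pa) available to circulate the fluid, ignoring friction losses."""
    if rho_liquid <= rho_vapor:
        raise ValueError("liquid must be denser than vapor")
    return (rho_liquid - rho_vapor) * G_M_PER_S2 * height_m


def latent_mass_flow(heat_load_w: float, h_fg_j_per_kg: float) -> float:
    """Refrigerant mass flow (kg/s) needed if all heat is absorbed by evaporation."""
    if h_fg_j_per_kg <= 0:
        raise ValueError("latent heat must be positive")
    return heat_load_w / h_fg_j_per_kg


if __name__ == "__main__":
    # Hypothetical refrigerant properties, not data for any specific fluid or product.
    dp = driving_pressure_pa(rho_liquid=1200.0, rho_vapor=30.0, height_m=2.0)
    m_dot = latent_mass_flow(heat_load_w=10_000.0, h_fg_j_per_kg=150_000.0)
    print(f"driving pressure: {dp:.0f} Pa")
    print(f"refrigerant flow: {m_dot:.4f} kg/s")
```

Under these assumptions the condenser must sit above the evaporator: the taller the liquid column, the larger the head available to overcome pressure drop in the piping and heat exchangers.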

Thermosyphons provide a method of passive heat exchange, based on natural convection, which circulates a fluid without the need for a mechanical pump. In other words, gravity does the work. Thermosyphons are not a new concept. They are widely used for heat transfer in many applications, including solar heating systems and chiller economizing.

So why not use thermosyphons in data centers?

Harnessing thermosyphons

It turns out that experiments in thermosyphon cooling for data centers have been conducted over the past few years, most notably at a US National Renewable Energy Laboratory (NREL) data center. In February 2020, NREL reported saving 1.15 million gallons of water over a year by utilizing thermosyphon cooling at a data center in Golden, Colorado. However, until now, manufacturers of data center cooling solutions generally have not harnessed the benefits of thermosyphons, except for use in chiller-based economizers, which have not proved to be that efficient.

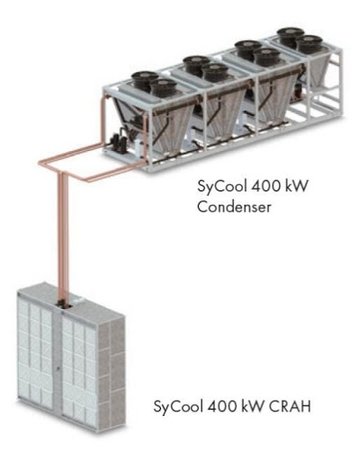

In 2018, Munters first introduced thermosyphon technology in a packaged air handler system for data center cooling, called SyCool. Since that time, Munters has further developed the technology, through thousands of test hours, in a split system for deployment to data centers (see figure). When compared to conventional dry mechanical cooling systems, Munters SyCool offers best-in-class energy savings, proving that thermosyphons can effectively be used to reduce the electrical energy required to reject data center heat without any use of water.

And the future?

As data centers increasingly become a part of the world's critical infrastructure, industry leaders will strive to find methods for more efficient data center operation. Watt densities will continue to increase as server technology evolves, and cooling methods may shift more toward liquid cooling as opposed to air cooling. As liquid cooling becomes more prevalent in the industry, thermosyphon technology can be utilized to achieve high-efficiency heat rejection by coupling a liquid cooling system with a thermosyphon system through a refrigerant-to-liquid heat exchanger.

Sometimes the answer is simple, and by applying age-old principles, we can create new innovations to solve some of our biggest challenges. When it comes to cooling data centers, thermosyphons provide the motive "force" to drive the next chapter in a sustainable future for data centers.