With worldwide spending on AI systems set to double between 2023 and 2026, it seems obvious that data center capacity would rapidly ramp up to meet demand.

Surprisingly, however, the past year has seen many data center operators hit the brakes on new projects and slow down on investment, with vacant capacity dropping by 6.3 percent in London during 2022-23.

What’s behind this counter-intuitive trend? To explain this, we need to understand some issues around AI compute and the infrastructure that supports it.

How AI changes data center infrastructure

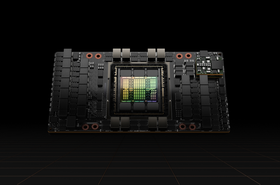

Data centers have historically been built around CPU-powered racks to tackle traditional computing workloads. AI compute, however, requires GPU-powered racks, which consume more power, emit more heat, and occupy more space than equivalent CPU capacity.

In practice, this means AI compute capacity will often require more power connections or alternative cooling systems.

Since this infrastructure is built into the structure of a data center complex, replacing it is often exorbitantly expensive, if not outright economically impossible.

Operators must therefore commit up front to a “split”: how much space in their new data centers is dedicated to AI versus traditional compute.

Getting this wrong and overcommitting to AI could leave data center operators saddled with permanently underutilized and unprofitable capacity.

This problem is exacerbated by the AI market being in its infancy, with Gartner claiming that it’s currently at the peak of inflated expectations in the hype cycle. As a result, many operators are choosing to hold back at the design stage rather than commit prematurely to the proportion of AI compute in their new data center projects.

Taking a holistic approach at the design stage

Operators are keenly aware, however, that there is only so long they can risk delaying investment before losing market share and competitive edge. But with many of the fundamentals of data center infrastructure being rewritten in real time, deciding when and how to commit is a tough task.

To balance the need to be first movers while offsetting risks, operators need to design their data centers to be maximally efficient and resilient in the era of AI compute. This requires a whole new and holistic approach to design.

1. Involve more stakeholders

Regardless of the exact split between AI and traditional compute that an operator decides on, data center sites with AI compute capacity promise to be significantly more complex than traditional facilities. More complexity often means more points of failure, especially since AI compute places significantly greater demands on a site than traditional compute.

As a result, to guarantee uptime and reduce the risk of costly problems during a site’s lifetime, teams need to be more thorough during the planning stages of data centers.

In particular, the design stage should seek input from a greater range of teams and expertise at the outset of projects. Alongside seeking expertise on power and cooling, designers should engage operations, cabling, and security teams from early on to understand potential bottlenecks or sources of faults.

2. Build AI into data center operations

Since operators now have AI compute on site, they should use their capacity to leverage AI for new efficiencies in their operations. AI’s adoption in the data center has been a long time coming, with the technology able to undertake workflows with great precision and quality. For example, AI can help with:

- Temperature and humidity monitoring

- Security system operations

- Power usage monitoring and allocation

- Hardware fault detection and predictive maintenance

By using the technology proactively at every stage of the data center lifecycle, operators could dramatically improve the efficiency and robustness of their operations. AI is well suited to addressing the new challenges posed by the novel and complex layouts of these next-generation data centers, for example through fault detection and predictive maintenance.
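As a rough illustration of the monitoring and fault-detection use cases listed above, the sketch below flags sensor readings that deviate sharply from a rolling baseline. It is a deliberately simple statistical check, not a trained model and not any operator's actual method; the function name `find_anomalies`, the window size, the threshold, and the sample temperatures are all assumptions made for the example.

```python
from collections import deque
from statistics import mean, stdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate sharply from the recent baseline.

    Hypothetical helper: a rolling z-score check, used here as a stand-in
    for the more sophisticated models a real site might deploy.
    """
    recent = deque(maxlen=window)  # sliding window of recent normal readings
    anomalies = []
    for i, value in enumerate(readings):
        if len(recent) == window:
            baseline = mean(recent)
            spread = stdev(recent)
            # A perfectly flat window has zero spread, so no score is computed.
            if spread > 0 and abs(value - baseline) / spread > threshold:
                anomalies.append(i)
                continue  # keep the outlier out of the baseline
        recent.append(value)
    return anomalies


# Made-up rack inlet temperatures in degrees Celsius, one reading per minute.
temps = [22.0, 22.1, 21.9, 22.0, 22.2, 22.1, 27.5, 22.0, 22.1]
print(find_anomalies(temps))  # prints [6], the 27.5 degree spike
```

The same pattern could in principle be applied to humidity or power-draw series; a production system would likely need per-sensor tuning and a more robust baseline than a short rolling window.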

3. Avoid false economies

AI places a greater load on data centers during peak times, such as during training runs or when running enterprise-grade models in production. During these periods, AI compute will often significantly exceed traditional expectations for power draw, cooling demand, and data throughput.

At the most basic level, this means greater strain on the underlying materials in a data center. If those materials or components are not of high quality, they will be more prone to failure. And because AI compute dramatically increases the number of components and connections at a site, cheaper, lower-quality materials that would have functioned well in traditional sites can bring data centers running AI compute to a halt.

To this end, operators should avoid trying to save money by buying lower-quality materials, such as substandard cables. Doing so risks a false economy, since these materials are more vulnerable to failure and need more frequent replacement. Most problematically, the failure of substandard materials and components often results in downtime or slowdowns that hurt a site's profitability.
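The false-economy argument can be made concrete with a back-of-the-envelope cost comparison. The sketch below assumes that each failure costs one replacement part plus a fixed downtime penalty; every figure in it (prices, failure rates, downtime cost, time horizon) is invented purely for illustration and is not drawn from any real site or supplier.

```python
def expected_lifetime_cost(unit_price, units, annual_failure_rate,
                           downtime_cost_per_failure, years):
    """Purchase cost plus expected replacement and downtime costs.

    Hypothetical model: failures are assumed independent, and each one
    is assumed to cost a replacement part plus a fixed downtime penalty.
    """
    purchase = unit_price * units
    expected_failures = units * annual_failure_rate * years
    failure_cost = expected_failures * (unit_price + downtime_cost_per_failure)
    return purchase + failure_cost


# Illustrative inputs only: 1,000 cables over five years.
cheap = expected_lifetime_cost(unit_price=10, units=1000,
                               annual_failure_rate=0.05,
                               downtime_cost_per_failure=500, years=5)
quality = expected_lifetime_cost(unit_price=25, units=1000,
                                 annual_failure_rate=0.01,
                                 downtime_cost_per_failure=500, years=5)

print(f"cheap cables:   {cheap:,.0f}")
print(f"quality cables: {quality:,.0f}")
```

Under these assumed inputs, the cheaper cables cost less to buy but more over the period, because downtime dominates the total. With different failure rates or downtime costs the comparison could come out the other way, which is why the inputs matter more than the formula.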

Addressing the infrastructure conundrum

While the infrastructural requirements of AI compute may be the main reason operators are procrastinating on investment, this is not going to be the case over the long run.

As market uncertainty lifts, firms will converge toward their “Goldilocks Zones” regarding the split between traditional and AI compute in their data centers.

As this happens, firms will need to ensure they have every advantage possible in their sites' operations as they learn and mature.

That means designing holistically from the outset, leveraging AI itself to discover new efficiencies in their sites, and investing in quality materials that can hold up to the greater demands of AI compute.